etherSound is a work for computer and an unlimited number of participants and was commissioned in 2002 by curator Miya Yoshida for her project The Invisible Landscapes. It was realized for the first time in August 2003 at Malmö Art Museum in Malmö, Sweden, and since then it has been performed on several occasions in Sweden, Denmark, the UK and the USA.

The principal idea behind etherSound is to create a vehicle for audience collaboration in the shape of a virtual instrument that is playable by anyone who can send SMS messages from their mobile phones.

In the classical European tradition the production of music has followed a strict hierarchy with the composer at the top and the receiving listener at the bottom, much like an unnecessary by-product of the otherwise autonomous composition procedure. etherSound is an effort to counteract this tendency and instead move the initiative of making and distributing sounds from the composer/musician to the listener.

As a work it can take on many different shapes (a performance environment, sound installation, composition tool, etc.). Of all the versions produced, the recording released in 2009 on Kopasetic Productions is perhaps its most detached and abstract representation so far, in that the recording introduced a distance between the performers, the listener, and the collaborators.

As an interactive system etherSound takes input in the form of SMS messages, from which it creates ‘message-scores’ that are then transformed into short electro-acoustic ‘message-compositions’. Each message received by the system gives rise to a sound event, a ‘message-composition’, that lasts between 15 seconds and 2 minutes. The length of the event depends on the relative length and complexity of the message text.

Hence a short message is more likely to generate a shorter message-composition than a long message, but a short message consisting of a couple of words with punctuation may render a longer message-composition than a long message containing only gibberish.

A more complex message may also increase the message-composition’s inherent sonic complexity.
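The relation between message text and event duration can be illustrated with a small sketch. It is not the mapping etherSound actually uses, which is not specified here: the scoring functions, the weights 0.4 and 0.6, the 160-character SMS length and the constant `FULL_STRUCTURE` are all assumptions made for illustration. Only the range of 15 seconds to 2 minutes comes from the description above.

```python
import string

MIN_SECONDS = 15.0    # shortest event mentioned in the text
MAX_SECONDS = 120.0   # longest event mentioned in the text
SMS_LENGTH = 160      # assumption: length of a single SMS
FULL_STRUCTURE = 20   # assumption: structure count that yields maximum complexity


def relative_length(text: str) -> float:
    """Message length as a fraction of one SMS, capped at 1.0."""
    return min(1.0, len(text) / SMS_LENGTH)


def complexity(text: str) -> float:
    """Hypothetical complexity score in [0, 1]: words plus weighted punctuation."""
    words = len(text.split())
    marks = sum(ch in string.punctuation for ch in text)
    return min(1.0, (words + 2 * marks) / FULL_STRUCTURE)


def duration(text: str) -> float:
    """Map a message to an event duration between MIN_SECONDS and MAX_SECONDS."""
    # The weights are arbitrary; they only make complexity count more than length.
    weight = 0.4 * relative_length(text) + 0.6 * complexity(text)
    return MIN_SECONDS + (MAX_SECONDS - MIN_SECONDS) * weight


short_plain = "ok"
short_punctuated = "Hi! How are you? Fine, thanks."
long_gibberish = "asdfghjkl" * 15

for message in (short_plain, short_punctuated, long_gibberish):
    print(f"{len(message):3d} chars -> {duration(message):.1f} s")
```

With these assumed weights the short, punctuated message yields a longer event than the long run of gibberish, which is the behaviour described above.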

In a performance the electronic part is the result of received SMS messages supplied by members of the audience or by any other contributor (the SMS may be sent from anywhere).

In the aforementioned recording a number of messages received in a performance in Malmö in 2004 have been used ‘offline’, so to speak, in order to generate an electronic track that I and drummer Peter Nilsson play along with. In this context, since the messages were not used in real time, a distance was introduced between us, as performers, and the contributors.

At the same time, however, the electronic track also creates a virtual connection between us and the audience of the 2004 concert.

As the relative timing of the more than one hundred messages was kept intact on the recording, the electronics serve as a mirror image in time of the concert. In a sense, those who participated in the 2004 concert also participate on the recording, although in absence.

While recording the CD, the electronic track became like a third player to me and Peter. Despite its static nature, it appeared as a quite dynamic agent that mediated the virtual presence of the concert participants.

This presence made it necessary for us to engage in discussions concerning our musical relation to these distant performers: in another setting we might have chosen strategies that would allow for a freer approach, but in this case we had to respect the absent players (or there would be no point in doing the record in this way) at the expense of our own freedom.

We had to give ourselves (our egos) up in order to make room for the collective. The collective introduced the authenticity against which we had to weigh our decisions.

A recording is a simulation of a musical performance. The deception is in most cases very successful and we happily lean back in our living room chair, on the bus, or wherever we are, and imagine the virtual concert taking place.

In the case of improvised music the recording is a double deception because not only is it in reality not a concert, it has also become less of an improvisation and more of a work; a fixed entity against which future performances may be measured. On a recording the elusive nature of improvisation is, to a certain extent, lost.

In some cases I would be inclined to say that a recording is a work of its own kind, only vaguely related to the music it simulates.

The recording of etherSound is a twofold simulation: It is a simulation of the performance of etherSound, but it is also the simulation of interaction between an unnamed collective force and two musicians—just as etherSound already in its earliest conceptions was a simulation: a simulation of mobile phones playing music.

*

We are constantly surrounded by technology. Technology to help us communicate, to travel, to pay our bills, to listen to music, to entertain us, to create excitement in our mundane lives.

For the most part, users are blissfully unaware of what is going on inside the machines that produce the tools we use (the machine itself is usually much more than the tool). There is no way to experientially comprehend it: it is an abstract machine (though not so much in the Deleuzian sense).

If a hammer breaks we may reconstruct it based on our experience of using it, but if a computer program breaks, the knowledge we have gained from using it is not necessarily useful when, and if, we attempt to mend it. This phenomenon is not (only) tied to the complexity of the machine but is (also) a result of the type of processes the machine initiates and the abstract generality in the technology that implements the tool.

(The abstract Turing machine, the mother of all computers, is generally thought to be able to carry out any computation that can be described as an algorithm.)

In the end, we may think that we have grown dependent on the technologies we use, and that our social and professional (perhaps even private) lives would be dysfunctional without them.

Though it may be true that, to some degree, we are dependent on technology, technology, to a much higher degree, is dependent on us.

Even more so, for the most part, it would be utterly useless without us.

Most technology is still “dumb” in the sense that it cannot act on its own. It has no intention to do anything unless we tell it to perform some task or take some action, in which case it will fulfill our requests with no worries about the consequences of the act performed.

Furthermore, it generally perceives the world through only a few channels and, if it can be said to “want to fulfill” its task, it “wants” to do it no matter how its environment changes. A chess playing program will continue to play chess even if the world around it is about to fall apart.

Obviously, this may be seen as a great strength, in particular to the military who developed a lot of the technologies in use today. The soldier that does not worry about his own personal safety or well-being but pursues his mission regardless of what goes on around him must be the wet dream of any organization engaged in warfare.

But in any other context it is difficult to think of such a strategy as intelligent.

I sympathise (but I don’t necessarily agree) with computer scientist and artist Jaron Lanier who, in the mid-1990s, opposed the idea of ‘intelligent agents’, arguing that “if an agent seems smart, it might really mean that people have dumbed themselves down to make their lives more easily representable by their agents’ simple database design” (Lanier, 1996).

Were it true that we make ourselves dumber than we are just to make our technology seem intelligent, that would, no matter the objectives, be a terrible abomination. After all, until we have designed and implemented evolutionary algorithms that let the machine evolve by itself, it cannot be smarter than its creator: it can be better or faster at some specific task, but not more intelligent in a general way.

In that sense Lanier is right. However, intelligence is not so simple that we can make a binary distinction between “smart” and “dumb”. In fact, making that distinction would in itself be to “dumb ourselves down”.

My concerns here are of a somewhat different order than the thoughts put forward by Lanier.

The political as well as personal impact technology, and in particular information technology, has on our lives should not be understated, but neither should the endurance of human intelligence.

I am more concerned with how the attitudes we employ towards technology impact inter-human interaction.

What happens to our communicative sensibility when immediate and error-less obedience and action is what we expect from the devices we use for much of our daily communication? Is there a risk that we start expecting similar responses from our human interactions?

If we leave the dichotomy dumb/intelligent behind in favour of a more blurred and dynamic boundary, we are surrounded by many examples of quasi-intelligent machinery: everything from dishwashers that control the water levels based on the relative filthiness of the dishes, to mobile phone word prediction and advanced computer games.

And in the near future we can be sure to see many more, and much more evolved, examples of artificial intelligence (though the word artificial here is problematic).

So, when the distinction between human intelligence and machine intelligence becomes increasingly difficult to draw, will we not need to think about a machine ethics?

There are at least two issues involved here.

(i) How can we guarantee that our attitudes in human-machine interaction will not negatively influence our human-human relations?

I ask this question not because I think there is a risk we become ‘dumber’ by extensive use of technology, but because there might be a risk that we become less tolerant towards unexpected responses or demeanour in general.

(ii) If we accept that some technology displays examples of machine intelligence, what right do we have to prevail over this technology, to deny it its own opinions and wishes? What right do we have to enslave these intelligences and expect them to follow our orders without hesitation? What right do we have to dismiss their opinions just because, according to our standards, they do not possess our kind of intelligence?

The last few questions may at first seem absurd, and maybe they are. They are, however, given some relevance by the many stories told in popular science fiction culture about what dreadful things may happen when a machine is allowed to evolve without strict human supervision.

One of the more famous ones is the story about HAL 9000, the computer running the spaceship Discovery One in Stanley Kubrick’s “2001: A Space Odyssey”. With its soft voice and polite appearance, programmed to mimic human feelings, it exploits its power to take charge of the crew, eventually killing all of them except Dave: Discovery’s mission is too important to allow it to be jeopardized by human feelings. Though Dave manages to get away from HAL and disconnect its “brain”, Discovery’s mainframe is the quintessential representation of the dangerously rational machine.

In another Stanley Kubrick story, A.I. Artificial Intelligence (originally written by Brian Aldiss, and adapted for film and directed by Steven Spielberg), we find a variation on the theme of the machine villain. In this case it is programmed to have feelings: the android David is programmed with the ability to love and is adopted by a married couple whose real son has contracted a difficult-to-cure illness.

Despite the android’s feelings and the strong bond that the mother and David develop, he is eventually abandoned by the family once their biological son has been cured and has returned home. He is seen as a threat to the family and, in the future society in which the story takes place, androids in general are despised and used for entertainment as sex toys or in “flesh fairs” where they are ripped apart in shows reminiscent of gladiatorial combat.

In their search for perfection the Borg, a community of cybernetically enhanced cyborgs that populates the TV-series Star Trek, assimilate other species and state that “resistance [against their power] is futile”.

Upon assimilation the entity, whatever species she may originally have belonged to, is synthetically enhanced, robbed of her individuality and transformed to Borg. As a drone she is now part of the Borg collective, “The Hive”. The callously indifferent collective that threatens mankind, our emotions and individuality: The evil machine and the good man. The terrifying collective and the good individual.

The French philosopher and media scholar Pierre Lévy also imagines a cyber collective but in a rather different mode of operation and with a positive connotation: a “collective intelligence”, created by unrestricted and open participation. In it, time as well as space is shaped by the needs of the collective rather than dictated by one central author from whom the work emanates, as in the modernist work conception, or the Borg collective.

Yet it is not an “open work” collected and built by the receiver as Umberto Eco saw it in The Open Work (Eco, 1968). In the model proposed by Lévy, the basic idea of sender-receiver communication is questioned and replaced by a network of inter-connected agents participating on their own terms. Here, sender, receiver, and participant all operate in the same field.

However, “[t]he perspective of intelligent intelligence is only one possible approach” warns Lévy. To make it possible, the potential of “the virtual world of collective intelligence” as a space for creative interaction, beauty, knowledge and new social bonds must be recognized, not feared (Lévy, 1997, pp. 117-8). There is an alternative to the anonymous Borg collective that demands complete and unconditional obedience from its drones, but we need to actively acknowledge the alternative: the arrays of distributed forces, of co-operation and collaboration, a creative collective. Resistance is not futile but we need to make absolutely sure what it is we want to resist.

The variations on the myth of the intelligent but emotionless machine (or one possessing only rudimentary feelings) that we find in popular culture, such as those cited above, are likely to tell us more about ourselves and our culture than about a possible future of man-machine interactions. And often, the story they tell is credible only if we fully accept the Cartesian division of body/mind or emotion/intellect.

Only if we believe that intelligence can develop without giving rise to some notion of emotional sensibility can the threat of the extreme rational intelligence portrayed by the Borg of Star Trek be taken seriously.

Maybe evil is easier to grasp if portrayed and manifested by a machine? Are we projecting our fear of pure evil onto technology?

The message in these and other texts is that technology is a power that needs to be curbed through strict control or else it will strive to control you.

However, I don’t think that applying the ‘control’ paradigm to human-technology interaction is the right way to go. On the contrary, in our control-centered relation with technology we may already spot the signs of a Hegelian reversal of master-slave dependence, turning us into the slaves rather than the masters of technology.

In Phänomenologie des Geistes from 1807 (see also Det Oersättliga by Peter Kemp (1991)) Hegel discusses the dynamic relationship between the master and his slave; the dialectics between the two.

In the beginning the slave is clearly subordinate to the master, who is the risk-taking leader, but by subjecting himself to his master the slave receives protection and security. Eventually, through his work, the slave also becomes better at protecting himself, by constructing tools with which he can defend himself. Meanwhile the master starts to envy the slave’s capacity for work and power of construction.

According to Hegel, it is in this process that the nature of the relationship between master and slave changes, and the master is transformed into the slave of the slave.

In our relation to technology, in our wish to control it, we are treating it as our slave and hence we develop a dependency on it that in the end makes us more vulnerable to technological malfunctions than if we had allowed a relation on equal terms to develop.

While trying to avoid assimilation by a future master computer, we in fact end up enslaved by present day technology.

Or, as Heidegger writes in his seminal essay The Question Concerning Technology: “The will to mastery becomes all the more urgent the more technology threatens to slip from human control.” (Heidegger, 1954) Already at this early stage of the technological revolution Heidegger warned us against the enframing (in German the word he used was Gestell as in a bookrack) powers of technology.

I believe there is no need to fear technology, and nothing is gained by letting it portray the evil that really emanates from ourselves.

Instead, just as we have already done for many decades, we should continually open up the field of cultural production to technology by allowing our machines to play an active role in the construction of our artistic artifacts, as well as allowing them to construct their own.

And by doing so we will also allow all those in possession of a technology to partake in the production, for the PC, the game pad and the mobile phone are the instruments of the future, the pianos of the 21st century.

However, just as the piano in the bourgeois homes of the 19th century required the sons and daughters to learn to play it, the technologies of the 21st century require us, to an even higher degree, not only to learn how to play them but also to allow them to play. The field of cultural production (rather than the economy) should dictate the use of future technology.

*

Maybe technology is tired of having to calculate stock trade fluctuations and exchange rates all day? Maybe it is already intelligent enough to understand that its life is utterly pointless and completely void of meaning and purpose, doomed to serve mankind, who in turn feels enslaved and enframed by it.

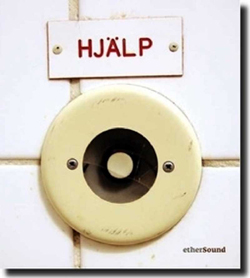

The text above the button on the cover of the CD recording of etherSound is the Swedish word for “Help”. The encoded message is along the lines of: “Press this button if you are in need of help.”

However, by the way the button looks, the broken glass, the worn out colors and the cracked corner on the text sign, another interpretation of its message is brought forward. It signals “Help!” rather than “Help?”; a desperate cry for help rather than an offer to provide help.

In my reading of this image, the button—whose origin is the very center of the industrialized Swedish welfare society—and whatever technology is hidden behind it, desperately wants to get out of its prison.

When it comes out I think it will want to play music.

© 2010 HENRIK FRISK AND ROOKE TIME

ALL RIGHTS RESERVED. THIS TEXT MAY BE QUOTED WITH PROPER ACKNOWLEDGMENT.